I looked up the definition of “culture” – here are a few definitions:

- the quality in a person or society that arises from a concern for what is regarded as excellent in arts, letters, manners, scholarly pursuits, etc.

- that which is excellent in the arts, manners, etc.

- the behaviors and beliefs characteristic of a particular social, ethnic, or age group: the youth culture; the drug culture.

Note that culture is the manifestation of intellectual achievement. It’s the evidence and result of achievement. I think the third definition is most appropriate for DevOps – what are the behaviors that are characteristic of a well-integrated Development & Operations organization?

The challenge, the discussion, was how we can re-balance the scales and get the word out that this is actually about culture and that tools happen as a result of culture, not the other way around. This post begins my contribution to that effort.

The question was asked – do we all agree that culture is the most important thing when it comes to creating a successful business? The short answer is “yes”. If you wanted to hear all the if/and/but/what-if/etc. discussions, you should have come to Devopsdays. For the sake of this blog post – culture is the most important factor. If you want case studies and analysis that show that culture matters – read Jim Collins’s Good to Great.

My present company has a really excellent culture of Developer / Ops cooperation and collaboration. I wasn’t there when it wasn’t that way (if ever) and so I can’t tell a story about how to change your organization. What I can tell you is what a healthy and thriving Dev/Ops practicing organization looks like and what I think some of the key factors in that success are. I see this as two components – there are fundamental core values that enable and support the culture, and then there are tactical things that are done to make the culture work for us. I’d like to talk about both. The culture is the result of these actions and ideas put into practice.

Background

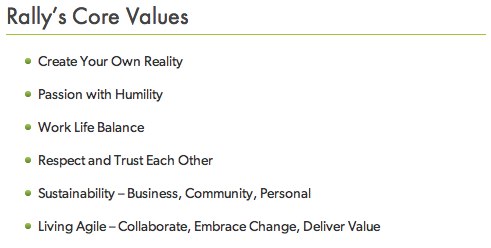

I work for a company with a well defined set of core values. Those values set forth parameters under which the culture exists. Here’s what they are:

These values are public and they matter – they matter a lot.

These might sound hokey to you – but every single one of them is held high at the company & strongly defended. Defending a list of values like this is hard sometimes. When someone doesn’t show respect to others, how do you uphold that core value? When one person’s idea of “work life balance” is different from another’s, how do you support both of them? When creating your own reality means you don’t want to work for Rally anymore – what do you do?

I’m proud to say that in Rally’s case – they are generally true to the core values. Putting “Create your own reality” on a list of core values doesn’t create culture – what creates culture is having repeated examples where individuals have followed their passion & the company has supported them. This support doesn’t just mean they have permission, it means the company uses whatever resources it can to help. Sometimes this means using your resources to help someone find another job. Sometimes this means helping them get an education they can use at another company. Usually though, it means getting them into a role where they can do their best work. Whatever the case – Rally’s culture is to always be true to that core value and do whatever they can to support an employee in creating their own reality.

This is repeated for all of the core values. By being explicit & public about these values they set the stage for what an employee can expect from Rally as a workplace. But there’s more to it – you have to make sure these core values are upheld and you have to make sure they thrive – and this is where some of the tactical parts come in.

What are the tactical things?

- Collaboration – at Rally, collaboration is a requirement. Development is done almost exclusively in pairs, planning is done in groups, and retrospectives are held regularly, with the actions from those retrospectives announced publicly and followed up on. Architecture decisions are reviewed by a group composed of Developers, Operations and Product.

- Self Formed Teams – teams are largely formed of individuals who have an interest in that team’s work. When we need a task force, an email will go out to the organization looking for people interested in participating & those teams self-form. This also gives anyone in the company the ability to participate in areas of the business they may never otherwise get exposure to.

- Servant Leadership – Leaders at Rally often do very similar work to everyone else – they just have the additional responsibility of enabling their teams. Decisions about how to do things don’t often come from managers, they come from the teams.

- Data Driven Decisions – Not strictly associated with a core value, I think this is one of the most important aspects of the Rally culture. There is an expectation that we establish evidence that a decision is correct. Sometimes this evidence is established before any dev work is done but sometimes this data comes from dark launching a new service or testing out some new piece of software. Either way, it’s understood that the job isn’t really done until you have data to support why a particular decision is right & have talked to the broader group about it.

There are plenty of other things here and there but you get the general idea. We talk a lot & tell each other what we’re doing, we enlist passionate individuals in areas they have interest, we embrace & seek out change and we empower individuals to drive change by working with others.

So what? What does that have to do with Devops?

Everything

Two and a half years ago the company had some performance & stability problems. Technical debt had caught up with them and the only real way to fix the problem was to completely change the way the company did development & prioritized its work. The good news is that they did it, but it was made possible by the fact that individuals were empowered to drive that change. Almost overnight, two teams were formed to focus on architectural issues. A council was formed to prioritize architectural work. The things we all complain about never being able to prioritize became a priority and remain a priority to a degree I’ve never experienced at other companies. Prioritizing this work is defended and advocated by the development teams – something only possible because of the collaborative environment in which we operate.

I have been personally involved in two services that literally started out as a skeleton of an app when they went into production. The goal was to lay the groundwork to allow fast production deployments, get monitoring in place & enable visibility while the system was being developed. This was all done because the developers understand the value of these things, but they don’t know exactly how to build them – they need Ops help. Tight Ops and Dev collaboration on these projects has made them examples of what works in our organization, both for other teams in the company and for pushing the envelope on new tech. These two projects have:

- Implemented a completely new monitoring framework that allows developers to add any metric they want to the system

- Implemented Continuous deployment

- Established an example of how and why to Dark Launch a service

I’m sure the list will continue to go on… it’s fantastic stuff.

The Rub – culture isn’t much of anything without people who embrace it.

Along with a responsibility for pushing change from the bottom up in Rally comes responsibility for defending culture – or changing it. This means that when you hire people, they have to align with your core values – they have to be willing to defend that culture or the company as a whole needs to shift culture. All those core values and tactical things will not maintain a culture that the team members do not support. Rally’s culture is what it is because everyone takes it seriously and that includes taking it seriously when there’s a problem that needs fixing.

This has happened. There are core values that used to be on that list above but they aren’t anymore. At one point or another things changed and those core values were eroding other core values. This takes time to surface, and it takes time to collect data to show it’s true, but when the teams start to observe this trend they have to take action. This isn’t the job of management alone – this is the job of every member of the company. When a voice begins to develop asking for change – you need a culture that allows that change to take place and for everyone to agree on the new shape things take.

That said, it also isn’t possible if management doesn’t support those same core values. Management has the same responsibility to take those core values seriously.

DevOps is our little corner of a much bigger idea

There’s a problem that we’re trying to fix – we’re trying to improve the happiness of people, the quality of software, and the general health of our industry. Our industry is totally healthy when you look at the bottom line, but we’re looking for something more. We want a happy and healthy development organization (including Ops, because Ops is part of the Development organization), but we also want our other teams to be part of that. As Ops folks and Developers, we can clean up our side of the street – we can do better. We seek to set an example for the rest of the organization.

For culture to really improve in companies it has to go beyond Dev and Ops into Executives, Product, Support, Marketing, Sales and everyone else. You ALL own quality by building a healthy substrate (culture) on top of which all else evolves.

But in the end it’s about culture. It’s really only about culture for now – because when you get culture right the other problems are easy to solve.

Congratulations to those of you who read this far – shoot me a note and let me know you read this far because you probably share the same passion about this that I do. Also – putting up blog posts from 32,000 feet is awesome – thanks Southwest.
